The AI Coding Illusion - Why Vibe Coding Falls Apart After the Demo

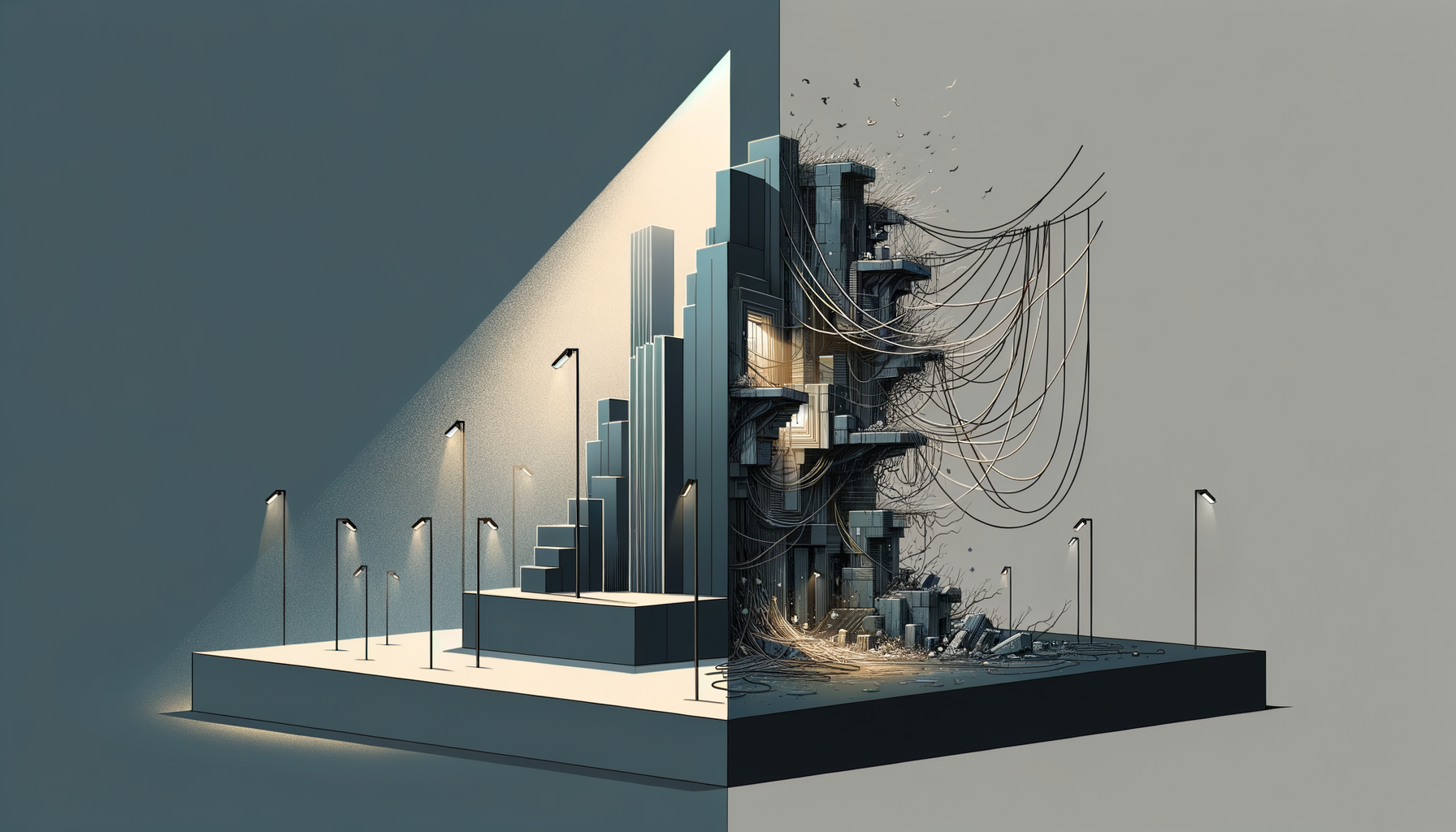

The demos look great. AI writes code faster than you can type and the result looks like a week's work in 45 minutes. The problem isn't the demo. The problem is thinking that's where software development ends.


The demos look great. You watch someone build a full app in 45 minutes with Cursor and Claude and you get this weird mix of excitement and dread. The AI writes code faster than I can type, catches bugs I'd miss, and the result looks like something that would've taken me a week.

This isn't like the other hype waves. RAD tools never actually wrote decent code. Low-code platforms never debugged your threading issues. But AI? It genuinely does what it says—writes a lot of code, quickly, that mostly works.

The problem isn't the demo. The problem is thinking that's where software development ends.

1. The Demo Always Works

There's a reason every demo looks good.

The demo is a controlled environment. The data is clean, the paths are happy, the edge cases haven't shown up yet, and nobody's had time to misuse the thing. When you watch someone ship a polished app in 45 minutes with an AI coding tool, you're watching a performance—not a preview of what maintaining that system looks like in 18 months.

I've been building systems for 30 years. Same pattern with every wave of new tools. The demo always works. The demo is never what kills you.

What kills you is six months later, when your thing has to work with everything else that already exists—and the person who built it can't explain what half of it does.

2. Production Isn't a Clean Problem

Production systems aren't clean problems. They're 15 years of decisions made by people who left the company, documented in comments nobody reads anymore, connected to a payment processor that had some weird quirk in 2017 that someone wrote a 40-line workaround for.

There's a cron job that should've been a proper service. A feature flag that became permanent. A bug you "fixed" by depending on a quirk of the ORM (object-relational mapper, the layer that translates between your code and the database), a quirk you really hope never changes.

The code remembers things. It carries all that history.

When you drop AI-generated code into that environment, you don't just replace the logic. You lose all the stuff the system learned to handle over time—the edge cases it adapted to, the assumptions baked in from two platform migrations ago, the workarounds nobody documented because the developer who wrote them thought they were temporary.

3. What AI Can't See

AI coding tools see the code you show them.

They don't see the support ticket from 2019 where a client's edge case almost broke billing. They don't see the 11pm fix that went in on a Tuesday, or the comment that says "don't touch this." They don't know about the meeting where the team picked the uglier solution because the clean one would've broken an API that one big customer depends on.

They see surface-level code and rebuild it—clean, fast, and wrong in ways that won't show up for months.

The most dangerous thing about AI-generated code isn't that it's bad. It's that it looks right. It passes tests, matches the spec, and handles the happy path cleanly. What it doesn't carry is the invisible institutional knowledge that accumulated in the original system over years of real use.

4. The Code Carries History

Software isn't just logic. It's accumulated decisions.

The function that does three things when it should do one? That happened because a deadline killed the refactor. The column with a misleading name? The business renamed that concept when it pivoted in 2016, but the data migration was expensive, so the schema kept the old name. The retry limit set to 7 instead of 3? Because in 2018 a downstream system started responding slowly during peak hours and 3 retries wasn't enough.

None of this is in a README. It's in the code itself, compressed into choices that look arbitrary until you know the history behind them.
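One cheap defense is to keep that history right next to the choice itself. Here's a minimal Python sketch built on the retry example above. `call_with_retries`, `MAX_ATTEMPTS`, and the assumption that the downstream call raises `ConnectionError` are illustrative, not taken from any real codebase. The code is ordinary exponential backoff. The comment is the part that matters.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Why 7 and not 3 (illustrative history, from the example in the text):
# in 2018 a downstream system started responding slowly at peak hours and
# 3 attempts weren't enough. Check peak-hour latency before lowering this.
MAX_ATTEMPTS = 7


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = MAX_ATTEMPTS,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on ConnectionError with exponential backoff.

    `sleep` is injectable so tests can skip the real delays.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Wait 0.1s, 0.2s, 0.4s, ... between attempts.
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")
```

Against a dependency that fails five times before answering, the default of 7 gets through while a limit of 3 gives up. Without the comment, the next "modernize this" pass has every reason to set it back to 3.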

When you hand that codebase to an AI and say "modernize this," what comes back is architecturally cleaner and historically empty. It handles the cases you described. It doesn't handle the ones you forgot to mention—because you forgot they existed.

That's the trap. And it doesn't reveal itself on launch day.

5. How It Falls Apart

In 2024, a startup decided to modernize their billing service with AI. In two weeks, two engineers and a model rebuilt what had taken the original team six months. Tests passed. Benchmarks looked good. The API appeared compatible. Everyone thought they'd just cut a quarter off the roadmap.

Six months later, new compliance requirements hit. They needed audit logs on every transaction. Should've been a one-week change.

It wasn't.

Nobody could answer basic questions. Where are all the side effects? Which calls trigger other systems? What happens if this fails halfway through? The AI had inlined assumptions everywhere. A one-week change turned into six weeks of archaeology. The original engineers were gone. The AI had no memory of what it built. Git history was just "apply suggestion" and "refactor" commits.

The speed they'd celebrated at launch got eaten entirely, along with more than $200K in emergency consulting time spent untangling what the AI had woven together. You can't debug what you don't understand. And you can't understand code that was never really explained to a human.
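The audit-log change hurt because nobody could say where the side effects were. Here's a minimal Python sketch of the opposite arrangement, assuming a hypothetical `SideEffectGateway` that every call to another system must go through. The names, the in-memory log, and the `charge_customer` flow are invented for illustration. With one choke point, "which calls trigger other systems?" has one answer, and audit logging becomes a change to a single method.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class SideEffectGateway:
    """Hypothetical single choke point for every call that touches another system."""
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def perform(self, action: str, target: str, fn: Callable[[], Any], **details: Any) -> Any:
        # Record intent before the call, so a failure halfway through still leaves a trace.
        entry = {"action": action, "target": target, "details": details, "status": "started"}
        self.audit_log.append(entry)
        try:
            result = fn()
        except Exception:
            entry["status"] = "failed"
            raise
        entry["status"] = "succeeded"
        return result


def charge_customer(gateway: SideEffectGateway, customer_id: str, cents: int) -> str:
    # Every external effect goes through the gateway, so finding the side effects
    # means searching for gateway.perform, not reading the whole codebase.
    gateway.perform("charge", "payment_processor", lambda: f"txn-{customer_id}", amount=cents)
    gateway.perform("send_receipt", "email_service", lambda: None, customer=customer_id)
    return "charged"
```

Each entry is written before the call and updated afterward, so a failure halfway through leaves a `failed` record instead of nothing.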

6. The Hidden Cost

That's the real cost—not in the first month, but the first time you need to change something non-trivial and the system fights back.

The speed you gained up front gets eaten by confusion and rework, usually at the worst possible moment—under deadline pressure, with compliance breathing down your neck, and the engineer who built it long gone.

This is where the vibe coding disaster is heading. Not the first deployment—that usually works fine. It's the third iteration, when the AI-generated codebase has its own invisible complexity and nobody on your team actually understands what any of it does.

7. AI as a Tool, Not Magic

I'm not anti-AI at ALL. I use it daily. It makes me faster at a lot of small things: writing tests I was going to write anyway, scaffolding boring glue code, getting first drafts of stuff I'll clean up later.

But the people selling AI coding are selling you the writing phase. They're not showing you maintaining, modifying, explaining to new engineers, or changing core behavior under load without breaking production.

That's where software actually lives. And that's where experience beats raw speed. Thirty years of watching codebases age teaches you which shortcuts come back to bite you, what "clean" code is actually hiding, and the difference between code that works and code that can change.

AI doesn't see any of that history. It just sees text.

8. How to Use AI Without Building a Time Bomb

None of this means stop using AI. It means stop using it as a replacement for understanding.

Use it on things you'd write anyway. Boilerplate, scaffolding, test stubs, documentation. Let it accelerate the boring parts while you own the decisions.

Read what it gives you before you ship it. If you can't explain why the code does what it does, you're not ready to ship it. That's not the AI's failure—it's a signal that you don't understand the system yet.

Don't let it touch institutional history without a human translating first. If you're modernizing a legacy system, the first job is documenting what the old system actually does—not what the spec says, what it actually does. That documentation is what you hand the AI, not the codebase.
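One concrete way to document what the old system actually does is a characterization test: run the same inputs through the old and new code and list every disagreement. Here's a minimal Python sketch, assuming a hypothetical late-fee rule. Both fee functions are invented for illustration. In a real project the "old" side would be the legacy system itself, or inputs and outputs recorded from production.

```python
from typing import Any, Callable, Iterable


def legacy_late_fee(days_late: int, balance_cents: int) -> int:
    # Hypothetical legacy behavior nobody wrote down:
    # no fee inside a 3-day grace period, and the fee is capped at 2,500 cents.
    if days_late <= 3:
        return 0
    return min(balance_cents // 20, 2500)


def new_late_fee(days_late: int, balance_cents: int) -> int:
    # Hypothetical clean rewrite from the spec: 5% of the balance once payment is late.
    if days_late <= 0:
        return 0
    return balance_cents // 20


def characterize(
    old: Callable[..., Any], new: Callable[..., Any], cases: Iterable[tuple]
) -> list[tuple]:
    """Return (inputs, old_result, new_result) for every input where the two disagree."""
    mismatches = []
    for args in cases:
        expected, actual = old(*args), new(*args)
        if expected != actual:
            mismatches.append((args, expected, actual))
    return mismatches
```

Each mismatch is a question for a human: is the 3-day grace period a bug, or something the business learned the hard way? Either answer belongs in the documentation you hand the AI.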

Keep git history meaningful. "Apply suggestion" and "refactor" aren't commits—they're confessions. When something breaks 18 months from now, you need to understand what changed and why. Commit messages are institutional memory too.
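The worst confessions can at least be caught at commit time. Here's a minimal sketch of a Git `commit-msg` hook in Python, assuming it's saved as `.git/hooks/commit-msg` and marked executable. The list of vague phrases is a hypothetical starting point, not a standard. Git runs the hook with the path to the file holding the proposed message, and a non-zero exit aborts the commit.

```python
#!/usr/bin/env python3
"""Hypothetical Git commit-msg hook: reject messages that record no intent."""
import re
import sys

# Stock subjects that say nothing about what changed or why; extend for your team.
VAGUE = re.compile(
    r"^(apply suggestions?( from code review)?|refactor(ing)?|fix(es)?|wip|updates?)\.?$",
    re.IGNORECASE,
)


def is_meaningful(message: str) -> bool:
    """Return False when the subject line is missing or is one of the vague stock phrases."""
    lines = [ln.strip() for ln in message.splitlines() if ln.strip()]
    if not lines:
        return False
    return VAGUE.match(lines[0]) is None


def main(argv: list[str]) -> int:
    # Git passes one argument: the path to the file holding the commit message.
    with open(argv[1], encoding="utf-8") as f:
        if is_meaningful(f.read()):
            return 0
    print("Commit message should say what changed and why.", file=sys.stderr)
    return 1  # non-zero exit aborts the commit


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv))
```

A hook can only reject the obviously empty messages. It can't make anyone write down why; that part is still on the humans.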

Test the edge cases, not just the happy path. The happy path always works. What's the behavior when a request fails halfway through? When the downstream system is slow? When the data is malformed? Those are the cases AI-generated code handles with assumptions, not understanding.
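Those questions translate directly into tests. Here's a minimal Python sketch, assuming a hypothetical `record_payment` flow that charges first and writes a ledger entry second, with the processor and ledger passed in as callables so tests can fake them. A slow dependency is modeled as a `DownstreamTimeout` exception, which is itself an assumption about how your client surfaces slowness.

```python
from decimal import Decimal, InvalidOperation
from typing import Callable


class DownstreamTimeout(Exception):
    """Hypothetical error a client raises when a dependency is too slow to answer."""


def record_payment(
    raw_amount: str,
    charge: Callable[[Decimal], str],
    refund: Callable[[str, Decimal], None],
    write_ledger: Callable[[str, Decimal], None],
) -> str:
    """Charge, then write the ledger; refund if the second step fails.

    charge, refund and write_ledger are hypothetical stand-ins for the
    payment processor and ledger service, injected so tests can fake them.
    """
    # Malformed data: reject before touching any other system.
    try:
        amount = Decimal(raw_amount)
    except InvalidOperation:
        return "rejected: malformed amount"
    if not amount.is_finite() or amount <= 0:
        return "rejected: invalid amount"

    txn = charge(amount)
    try:
        write_ledger(txn, amount)
    except DownstreamTimeout:
        # Failure halfway through: the money moved but the ledger didn't.
        # Compensate so the two never disagree.
        refund(txn, amount)
        return "rolled back"
    return "recorded"
```

In tests, pass in fakes that fail on purpose: a ledger that raises `DownstreamTimeout` after the charge went through should produce a refund, and amounts like `"12,50"` or `"NaN"` should be rejected before any system is touched.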

Have someone with end-to-end knowledge review the seams. Vibe coding fails hardest at integration points—where your new system touches something old, external, or human. If you don't have that expertise in-house, borrow it before you ship, not after.

9. The Coming Reality Check

The reckoning isn't coming because AI is bad—it's genuinely impressive technology. It's coming because everyone's treating the wow-demo like it's the whole story.

That demo is roughly 5% of what happens to code in production—the initial write, on clean data, with no users, no edge cases, no compliance requirements, and no staff turnover. The other 95% is everything that happens after the code meets the real world: real users doing unexpected things, real compliance audits, real incidents at 2am, real engineers who weren't there when it was built trying to change it without breaking it.

Every engineer who's shipped systems and kept them running knows this. The pressure to ship fast is real. The cost of shipping fast without understanding is also real—it just shows up later, when you can least afford it.

10. Who Fixes This

When the AI-generated codebase hits the wall—and enough of them will—someone has to fix it.

That someone understands how systems actually work together. How the frontend talks to the backend, how the backend talks to the database, how auth and caching and failure modes interact. They understand integration and legacy constraints, the difference between a system that works and a system that can change, what "clean" code is often hiding underneath.

This is what experience actually buys you. Not speed—judgment. The ability to look at code that passes tests and still know it's fragile. The instinct for which shortcuts come back to bite you. The pattern recognition that comes from watching codebases age over years, not weeks.

AI is a powerful tool. In the hands of someone who understands systems end-to-end, it's a force multiplier. In the hands of someone who doesn't, it's a faster way to dig a deeper hole.

The question isn't whether to use AI. It's whether you understand what you're building well enough that when it breaks, you know where to start.

If you've already run into one of these walls with AI-generated code, I want to hear about it.

Context → Decision → Outcome → Metric

- Context: A PE-backed portfolio company brought in a senior engineer with strong AI and backend credentials to modernize a core integration layer. Six-week build. Clean demo. Client thrilled. I was brought in afterward to assess the system before it connected to a 20-year-old ERP platform running the client's core operations.

- Decision: Ran a full integration audit before go-live—mapped every assumption in the AI-generated broker layer against actual ERP data flows, edge cases, and undocumented exceptions in the legacy system.

- Outcome: Found multiple silent failure modes and subtle data corruption risks that wouldn't have surfaced until production. System required substantial rework before it could safely connect to live data.

- Metric: Six weeks of AI-assisted build time. Four weeks of remediation before production clearance. Estimated rollback cost if the issues had surfaced under live conditions: significant, in downtime, data reconciliation, and re-engineering.
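The core of that kind of audit is unglamorous: run real legacy data past the new layer's assumptions and count what doesn't fit. Here's a minimal Python sketch, assuming a hypothetical status-code mapping between the ERP and the new broker layer. The codes, the field name, and the sample records are invented for illustration; a real audit would pull records from the legacy system rather than a hand-written list.

```python
from collections import Counter
from collections.abc import Iterable, Mapping

# Hypothetical mapping from legacy ERP status codes to the new layer's statuses.
STATUS_MAP = {"O": "open", "C": "closed", "H": "on_hold"}


def translate(code: str) -> str:
    # The kind of line that hides a silent failure: unknown codes quietly become "open".
    return STATUS_MAP.get(code, "open")


def audit_codes(
    records: Iterable[Mapping[str, str]], field: str, mapping: Mapping[str, str]
) -> Counter:
    """Count values seen in real legacy records that the mapping does not cover."""
    return Counter(r.get(field) for r in records if r.get(field) not in mapping)
```

The `.get(code, "open")` default in `translate` passes every test written against clean data and quietly mislabels records in production. The audit surfaces the codes that would hit that default before live data does.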

Anecdote: The Billing Service That Looked Done

In 2025, I reviewed a billing modernization project for a mid-size SaaS company.

Two engineers and an AI coding tool had rebuilt a billing service that had taken the original team six months to build. Two weeks. Tests passed. API compatibility confirmed. Everyone was proud of it, and honestly, the code was clean—well-structured, readable, genuinely impressive for two weeks of work.

I was asked to look at it before it went live because one of the investors had seen a similar situation go sideways at another portfolio company.

What it didn't have was any of the institutional knowledge from the original system. Six years of edge cases—partial payment handling, retry logic tuned for a specific payment processor that had been flaky in 2021, a grace period calculation that had been adjusted three times based on actual churn data—none of it was there. The AI hadn't been told about any of it. Nobody had thought to tell it, because nobody had sat down and documented what the old system had learned.

Before it went live, a new compliance requirement surfaced that needed audit logging on every transaction. In the original system, that would've been a two-day change. In the new one, it took three weeks because the side effects were scattered and nobody could confidently say what else a change would touch.

The "fast" version ended up costing them an extra month of engineering time just to reach feature parity with what they'd replaced—before accounting for the edge cases still waiting to surface in production.

The speed didn't disappear. It just got deferred, with interest.

Mini Checklist: Shipping AI-Assisted Code Without Landmines

- [ ] Can you explain, in plain English, why any given function does what it does—not just that it passes tests?

- [ ] Is your git history meaningful? Could someone six months from now understand what changed and why from the commit log?

- [ ] Have you documented what the existing system actually does (not what the spec says) before handing it to AI for modernization?

- [ ] Have you tested failure modes—partial failures, slow dependencies, malformed inputs—not just the happy path?

- [ ] Does someone with end-to-end knowledge own the integration points, or were those AI-generated too?

- [ ] Is there a human who can answer "what happens when this fails at 2am?" without reading code for 20 minutes first?

- [ ] Have you given new engineers enough context that they could change something non-trivial without breaking production?

- [ ] Did you budget time to turn prototype-quality code into production-quality code—or did you ship the prototype?

- [ ] Do you know where the edge cases from the old system went in the new one, or are you assuming the AI figured them out?

- [ ] If the engineer who built this left tomorrow, could the team maintain it?